Platform: Microsoft Copilot Studio

Data Layer: Microsoft Dataverse

Use Case: Record Management & Audit

Status: ✅ Complete

Imagine you’re managing a team who support Power Platform across your organisation. A handful of individuals are responsible for making Dataverse changes on everyone else’s behalf — and the requests keep coming. It’s unsustainable, it creates a bottleneck, and it takes power away from the very people you’re trying to enable. In a support environment bound by SLAs, that dependency isn’t just frustrating — it can be genuinely time-critical.

But there’s a second problem that’s easier to overlook: audit trail, accountability, and governance. When a Dataverse record is changed, is that change logged? Is there a clear record of why it was made, and who requested it? If someone reviewed the audit history of a record and saw that you made a change you can’t explain — because you make dozens of changes a day — how would that look? In regulated industries or environments with strict governance requirements, that’s not a hypothetical risk. It’s a real one.

That’s exactly the scenario I found myself in. And it’s what led me to build a Copilot Studio agent that removes individual dependency, executes changes in a structured and reliable way, and automatically records the reason for every action directly onto the Dataverse record’s timeline, creating a clear, traceable audit entry every single time.

The agent’s story actually begins back in 2025, when in its infancy it was a straightforward knowledge agent. Ask it a question, and it would search our SharePoint site for the answer. Simple — but effective. Early feedback from the team was genuinely positive; it was surfacing answers to process questions far faster than waiting on me, and it gave the team a degree of independence they didn’t have before.

That said, a knowledge agent has its limits. It relies entirely on the knowledge source being accurate and up to date, and critically, it can’t do anything — it can only tell you things. That limitation is what drove the next phase of development.

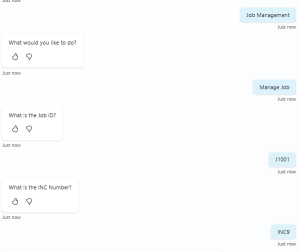

Today, the agent has a set of custom topics that are triggered when specific phrases are used — such as “Record Management” or “Manage Job”. Once a topic is triggered, the agent asks the user what they would like to do, presenting a structured list of available actions. The screenshot below shows this topic in action, reproduced to protect live data.

Once the user makes their selection, the agent follows the corresponding path and asks a follow-up question to capture the record number. That value is saved as a variable and passed directly into an Agent Flow in Power Automate.

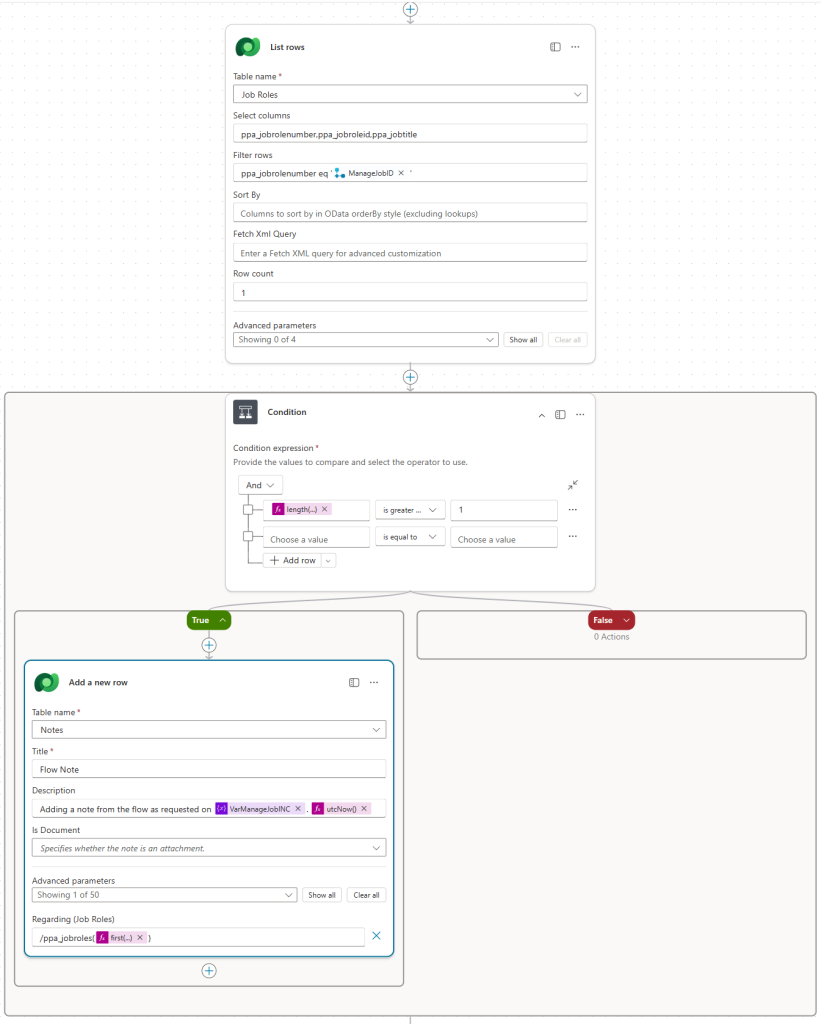

This is where the real work happens. The flow first validates that the record exists in Dataverse. If it does, it carries out the requested changes, writes an audit entry to the record’s timeline with a clear description of what was done and why, and returns a response back to the agent. The agent then confirms the outcome to the user — success if the record was found and updated, or a clear failure message if it wasn’t. The screenshot below shows the flow structure, again reproduced to protect live data.

The Copilot Studio Topic

The topic is triggered when the user says certain phrases — in the mockup reproduced here, those phrases are “Job Management” and “Manage Job”. Once triggered, the agent presents the user with a set of multiple-choice options. Depending on the selection, it follows that specific path and set of actions — in this mockup, the option chosen is “Manage Job”.

Once the choice has been made, the topic asks two follow-up questions:

- What is the Job ID?

- What is the INC Number?

We’ll come back to why that second question matters shortly. With both answers captured, they are passed to the Power Automate flow as variables.

The Dataverse Record Update

With the variables received from the agent, the first step in the flow is to initialise them. This gives us much greater flexibility in how we work with the data downstream, and in my production environment I also initialise a Message variable at this stage — more on that shortly.

With the variables initialised, the flow checks whether the record provided by the user actually exists, using the List Rows action against the relevant Dataverse table. This is the first layer of governance — we only want to act on records we can confirm exist, and we want to remove any possibility of an error caused by an incorrect ID being passed in.
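For readers who think in code rather than flow actions, the same existence check can be sketched as a Dataverse Web API query in Python. This is an illustration only, not what the flow itself runs: the organisation URL, the `ppa_jobid` column name and the `build_lookup_url` helper are all assumptions made for the example. Only the `/ppa_jobroles` table reference comes from the mockup.

```python
from urllib.parse import quote


def build_lookup_url(org_url: str, entity_set: str, id_column: str, job_id: str) -> str:
    """Build a Web API query roughly equivalent to a filtered List Rows call.

    All argument values used below are hypothetical; swap in your own
    environment URL, table and column names.
    """
    # OData string literals escape a single quote by doubling it.
    literal = job_id.replace("'", "''")
    filter_expr = f"{id_column} eq '{literal}'"
    # $top=1 keeps the check cheap: we only need to know whether a row exists.
    return f"{org_url}/api/data/v9.2/{entity_set}?$filter={quote(filter_expr)}&$top=1"


url = build_lookup_url(
    "https://example.crm.dynamics.com",  # hypothetical environment URL
    "ppa_jobroles",                      # table from the mockup
    "ppa_jobid",                         # hypothetical ID column
    "J-1001",                            # hypothetical Job ID from the user
)
print(url)
```

Escaping the quote characters matters here because the Job ID is typed by a user. It is passed into the filter as a value, so it should never be able to change the shape of the query.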

If no matching record is found, the flow follows the False path and sets the Message variable to inform the user that the record could not be found. If the record does exist, it follows the True path and proceeds.

With the record confirmed, the flow updates it as instructed. It’s worth noting that in a production environment, auditing should be enabled on any entity you’re modifying — if it is, the changes made here will appear in the audit trail. However, the audit trail alone doesn’t tell you why the change was made, or who originally requested it — particularly relevant when changes are being executed by a Service Account rather than an individual user. That’s exactly the gap the next step addresses.

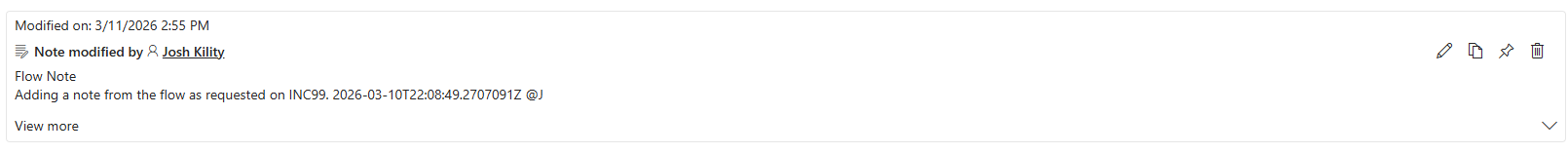

The Timeline Audit Entry

A record timeline is designed to capture everything that has happened with that record. Take a support case as an example — the timeline might contain the original inbound email that opened the case, notes from calls made to other teams, referrals, and the final response sent back to the customer. It’s the full story of that record in one place.

Adding an entry to that timeline from the flow was therefore the natural way to close the governance loop — and following thorough testing in a reproduced environment, this action has now been implemented in the production solution. Every record management action taken through the agent is recorded directly on the record’s timeline, capturing what was changed, when, and critically — why.

Because a timeline isn’t a Dataverse entity in its own right, the way to write to it is by creating a Note record in the Annotation entity. The Note’s Regarding column is a polymorphic (multi-table) lookup, meaning it can point at records in many different tables (you can read more about polymorphic lookups on Microsoft Learn). That makes configuring this action slightly more involved, but entirely achievable.

As shown in the screenshot, the Regarding field of the Note references the table of the record we’ve just modified (in this mockup, /ppa_jobroles), followed by a reference to the specific record — identified as the first result returned by the earlier List Rows action.
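To make the Regarding reference more concrete, here is a minimal Python sketch of the equivalent request body if the Note were created through the Dataverse Web API instead of the flow action. It assumes the table's logical name is `ppa_jobrole` (inferred from the `/ppa_jobroles` set name in the mockup, not confirmed), and the `build_note_payload` helper, the subject text and the GUID are invented for illustration.

```python
def build_note_payload(entity_logical_name: str, entity_set: str,
                       record_id: str, subject: str, text: str) -> dict:
    """Build the body for creating a Note (annotation) bound to one record.

    The Regarding lookup is set with an @odata.bind key whose name includes
    the target table's logical name, because the lookup is multi-table.
    """
    binding_key = f"objectid_{entity_logical_name}@odata.bind"
    return {
        "subject": subject,
        "notetext": text,
        # Table reference followed by the specific record, as in the screenshot.
        binding_key: f"/{entity_set}({record_id})",
    }


payload = build_note_payload(
    "ppa_jobrole",                           # assumed logical name
    "ppa_jobroles",                          # set name from the mockup
    "00000000-0000-0000-0000-000000000001",  # placeholder GUID of the first List Rows result
    "Record updated via agent",              # hypothetical subject
    "Adding a note from the flow as requested on INC0012345",
)
print(payload)
```

The part that tends to trip people up is the key name. Because Regarding can point at many tables, the target table has to be named in the binding itself, not just in the path.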

Now — remember that second question the agent asked after the action was selected? “What is the INC Number?” That variable is placed into the description of the Note. In the mockup, the description reads:

“Adding a note from the flow as requested on [VarManageJobINC] [UTCNow]”

VarManageJobINC = the incident reference number provided by the user · UTCNow = the timestamp of the action
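As a rough Python equivalent of that description, the sketch below joins the incident reference and a UTC timestamp into the same sentence. The `build_note_text` helper and the sample INC number are hypothetical, and the seconds-precision timestamp format is my own choice for readability. The flow's own timestamp output may be formatted differently.

```python
from datetime import datetime, timezone
from typing import Optional


def build_note_text(inc_number: str, now: Optional[datetime] = None) -> str:
    """Compose the Note description from the INC reference and a UTC timestamp."""
    # Default to the current time; accept an explicit value so tests are repeatable.
    moment = (now or datetime.now(timezone.utc)).astimezone(timezone.utc)
    stamp = moment.strftime("%Y-%m-%dT%H:%M:%SZ")
    return f"Adding a note from the flow as requested on {inc_number} {stamp}"


print(build_note_text("INC0012345"))  # hypothetical incident reference
```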

This is what completes the governance piece. If anyone questions why a record was changed, there is a clear reference number on the timeline that can be traced back to whoever raised the request and the reason it was made — all without any manual effort from the person who actioned it.

With the timeline entry written, the flow sets the Message variable to confirm the action was completed successfully. That message is passed back to the agent, which relays it directly to the user.
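Put together, the flow's control logic is small enough to sketch in a few lines of Python. This is a simplified model under stated assumptions, not the flow itself: `run_manage_job` and the three callables passed into it are hypothetical stand-ins for the List Rows, update and Note actions, and the message wording is invented.

```python
from typing import Callable, Dict, List


def run_manage_job(
    job_id: str,
    inc_number: str,
    find_rows: Callable[[str], List[Dict]],
    update_row: Callable[[Dict], None],
    add_note: Callable[[Dict, str], None],
) -> str:
    """Validate, update, audit, then return the message relayed to the user."""
    rows = find_rows(job_id)
    if not rows:
        # False path: nothing is changed and nothing is written to a timeline.
        return f"Job {job_id} could not be found. No changes were made."
    record = rows[0]  # first result, as in the flow
    update_row(record)
    add_note(record, inc_number)  # audit entry is written only after the update
    return f"Job {job_id} was updated and a timeline note was added."


# In-memory stand-ins so the sketch runs without a Dataverse environment.
table = {"J-1001": {"id": "J-1001", "status": "Open"}}
notes = []
message = run_manage_job(
    "J-1001",
    "INC0012345",
    find_rows=lambda job: [table[job]] if job in table else [],
    update_row=lambda row: row.update(status="Closed"),
    add_note=lambda row, inc: notes.append((row["id"], inc)),
)
print(message)
```

Passing the three actions in as functions keeps the ordering rule easy to test: no record means no update and no note, and a note is never written for a change that was not attempted.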

The mockup reproduced on this page demonstrates a single topic with one available action — built this way intentionally to illustrate the pattern clearly without exposing live data. In practice, the production agent already runs three custom topics, each covering a different Dataverse record type and containing multiple actions that carry out specific tasks against those records.

That’s the strength of the structure. Once the pattern is established — conversational trigger, structured data capture, a validated Dataverse action, and a transparent audit entry on the record’s timeline — extending it to cover new record types and new tasks is straightforward. Each additional topic follows the same proven approach, meaning the effort to expand the agent remains low as requirements grow.

It’s also worth acknowledging something that often comes up in conversations about AI agents: could this be fully autonomous? Technically, yes. The agent could be configured to take actions without any human prompt, but I’ve deliberately chosen not to build it that way. Every action this agent takes is initiated by a human. The agent doesn’t act on its own judgement; it acts on an explicit instruction, captures who gave it, and records it. That’s not a limitation of the technology. It’s a governance decision, and the right one. In environments where compliance, oversight, and accountability matter, keeping a human in the loop isn’t optional; it’s essential.

If there’s one thing that has shaped this agent more than any technical decision, it’s feedback. From the moment the original knowledge agent went live, the team’s reactions — what worked, what was confusing, what they wished it could do — directly influenced every development since. New topics were added for two reasons: to give the team greater capability and independence, reducing the time spent waiting on others to carry out tasks for them, and because the feedback they provided made clear exactly where those additions would have the most impact. That feedback loop is something I’d encourage anyone building a Copilot agent to treat as seriously as the build itself. The technology enables the solution, but the people using it define what that solution should actually be.

The other major takeaway is the value of getting the blueprint right before scaling. The first custom topic was the hardest — not because it was technically complex, but because every decision made there became the standard for everything that followed. How variables are named and initialised, how the record validation is structured, how the timeline entry is written, how the response is passed back to the agent. Once that pattern was proven end-to-end, building the second and third topics was significantly faster. The framework was already there — it just needed applying to a new record type. That’s a meaningful efficiency gain, and it compounds as the agent grows.

What this case study ultimately demonstrates isn’t just what Copilot Studio and Power Automate can do in isolation — it’s what’s possible when they’re combined thoughtfully, with a clear business problem at the centre. The agent started as a way to reduce individual dependency and answer questions faster. It’s grown into a governed, auditable, conversational interface for managing live Dataverse records — one that the team actually use, that leadership can trust, and that continues to evolve based on real feedback from real people.

That, for me, is what good Power Platform development looks like.
